Financial services firms are racing to integrate runtime observability into their AI deployments as regulators, internal auditors, and cybersecurity insurers increasingly treat real-time governance of autonomous agents as non‑negotiable. Institutions using generative AI for core functions such as fraud detection, credit underwriting, trade surveillance, and claims processing are discovering that without continuous logging, decision lineage, and executive control over agent behavior, audit teams cannot attest to process integrity or compliance. This shift toward runtime security parallels the evolution from perimeter firewalls to modern SOC pipelines, but it comes with far closer scrutiny now that enterprise AI is firmly in the spotlight.

Palo Alto Networks Inc. (NASDAQ: PANW), Microsoft Corporation (NASDAQ: MSFT), International Business Machines Corporation (NYSE: IBM) and CrowdStrike Holdings, Inc. (NASDAQ: CRWD) are leading vendors in this convergence of AI, compliance, and runtime governance. With critical regulations such as Basel III, PCI‑DSS, SOX, GDPR, and the EU Artificial Intelligence Act pointing to the need for real-time model monitoring, financial services leaders are accelerating deployments of systems like Prisma AIRS, Sentinel telemetry extensions, and Watsonx.governance. This trend has also attracted institutional investors, as runtime security becomes part of capital risk evaluations and cyber insurance eligibility.

Why are banks and insurers treating runtime AI agent logging and control as audit and compliance functions that go beyond cybersecurity?

In regulated environments, supervisory and audit frameworks operate on the assumption that any software influencing financial flows or personal data must produce verifiable evidence chains. This is why Basel III requires loss estimation and scenario testing, PCI‑DSS demands transaction integrity logging, and SOX insists on access controls and auditability. When an autonomous AI agent handles KYC decisions, fraud scoring, or portfolio analysis, runtime logging is needed to ensure each step can be reviewed and validated.

For example, a U.S. regional bank halted deployment of a generative AI assistant for credit approval when its internal audit team flagged prompt drift and unverifiable decision paths. Only after deploying Prisma AIRS—with per‑prompt hashing, session memory logs, and automated policy rollback—could compliance sign off. Risk teams now expect similar controls for every AI system touching regulated workflows, insisting that runtime observability is built into the procurement process.
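To make "per‑prompt hashing" concrete, here is a minimal sketch of a tamper-evident prompt log in which each entry's hash also covers the previous entry's hash. It is an illustration only and does not describe how Prisma AIRS or any vendor product works; the class name `PromptAuditLog`, its fields, and the sample decisions are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class PromptAuditLog:
    """Hypothetical append-only log; each entry is chained to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, session_id: str, prompt: str, decision: str) -> dict:
        # The first entry chains to a fixed all-zero value.
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        record = {
            "session_id": session_id,
            # Store a hash of the prompt rather than the raw text.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "decision": decision,
            "prev_hash": prev_hash,
        }
        record["entry_hash"] = _digest(record)  # computed before the key is added
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "entry_hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["entry_hash"]:
                return False
            prev_hash = record["entry_hash"]
        return True
```

An auditor-side check would call `verify()` before relying on the log. Changing a recorded decision after the fact causes it to return `False`.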

Insurers are likewise incorporating runtime agent governance into underwriting criteria. A large European insurance provider recently refused to extend cyber coverage for a fintech client that lacked runtime telemetry, citing unquantifiable inference risk. Procurement managers confirm that runtime visibility is now frequently listed as a mandatory feature in requests for proposals (RFPs), elevating it to a gating criterion for AI-service deals in the financial sector.

How are leading players like Palo Alto Networks, Microsoft, IBM and CrowdStrike shaping runtime observability tools tailored for finance?

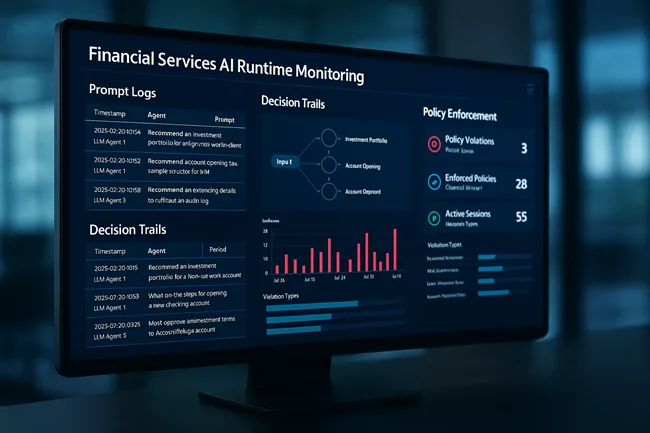

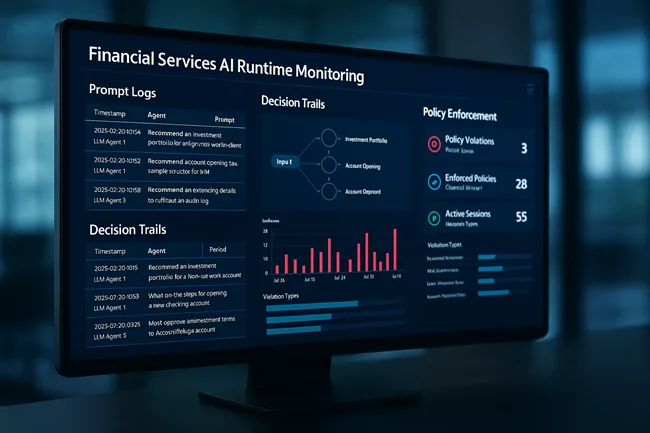

Palo Alto Networks’ Prisma AIRS has rapidly become a key building block for agentic AI oversight. Its integration with Cortex XSIAM and Prisma Cloud enables monitoring of prompt text, memory state, API calls, and policy violations in real time. The vendor reports that in early 2025, 40 percent of its top 100 banking clients had adopted AI runtime controls as part of broader AI resilience initiatives. While offering granular enforcement, Prisma AIRS also supports manual review workflows, allowing audit teams to flag suspicious prompt behavior and adjust risk thresholds via executive dashboards.

Microsoft is embedding runtime observability within its cloud stack. Its Responsible AI dashboard and Security Copilot now allow agents deployed through Azure AI Studio to feed real‑time telemetry into Sentinel. Financial services customers using Copilot for Finance, Fraud Insights, and Trade Advisor can see agent activity logs alongside cloud identity events, enabling SOC and compliance teams to correlate behavior with policy exceptions. A Microsoft spokesperson noted that this has already accelerated underwriting cycles in fintech startups by reducing policy review times from days to hours.

IBM brings Watsonx.governance to compliance-oriented fintech and banking environments. The platform supports runtime logging for model drift, fairness scoring, token-level prompt tracking, and lineage mapping. Financial users report the ability to integrate Watsonx logs directly into controls for MiFID II, FINRA, GDPR, and internal model risk frameworks. According to IBM’s Q2 FY25 financial disclosures, investment in Watsonx runtime observability has been a strong driver of product engagements, especially in Europe and the Middle East, where regional AI regulatory drafts are already demanding runtime auditing of autonomous systems.

CrowdStrike also offers runtime agent logging capabilities via its Falcon X platform, incorporating behavior telemetry from AI tools used in fraud detection, cybersecurity triage, and operational automation. Though less specialized than Prisma AIRS or Watsonx, Falcon X excels in holistic visibility—particularly in hybrid environments with multiple enforcement layers. Financial institutions using Falcon have reported up to a 30‑minute reduction in mean‑time‑to‑detect (MTTD) for AI‑agent anomalies.
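For readers unfamiliar with the metric, mean‑time‑to‑detect is simply the average gap between when an anomaly occurs and when it is detected. The sketch below shows the arithmetic; the function name `mean_time_to_detect` is hypothetical and the timestamps in the usage note are made up for illustration, not taken from any vendor report.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(incidents: list) -> timedelta:
    """Average of (detected - occurred) over a list of (occurred, detected) pairs."""
    if not incidents:
        raise ValueError("at least one incident is required")
    delays = [detected - occurred for occurred, detected in incidents]
    return sum(delays, timedelta()) / len(delays)
```

With two illustrative incidents detected 10 and 30 minutes after they occurred, the function returns a `timedelta` of 20 minutes. A "30‑minute reduction" would be the difference between this figure measured before and after a tooling change.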

What supervisory frameworks and auditor expectations are emerging around AI runtime security for financial services by 2026?

Regulatory bodies are formalizing runtime observability expectations for AI systems with financial and identity implications. The UK Financial Conduct Authority (FCA) and the Office of the Comptroller of the Currency (OCC) in the U.S. have outlined expectations in recent supervisory guidance, stating that institutions must monitor model behavior continuously post‑deployment and feed findings into governance dashboards. The EU AI Act calls for “log recording of automatic decision‑making processes,” which is widely interpreted to include agentic AI systems used in credit, insurance, or investment advice.

Enterprise auditors echo these expectations. Internal audit departments increasingly integrate runtime metrics into quarterly AI risk reviews, checking for anomalies, drift, policy violations, or “hallucination incidents.” They expect runtime logs to be accessible via SIEM, GRC, or risk dashboards, and to support forensic analysis in the event of an incident. Firms unable to demonstrate runtime monitoring have been excluded from sector-wide pilot programs in multiple jurisdictions.

What are the operational and economic benefits of runtime governance in AI workflows for financial use cases?

While runtime observability is ostensibly a compliance tool, it is delivering measurable ROI. Clients who implemented real-time monitoring in credit underwriting, fraud scoring, and wealth management workflows report reductions in false positives, unexplained decision variance, and manual review backlogs. A global insurer that added runtime containment reported a 25 percent decrease in customer escalation calls, while a bank using behavioral containment saw fraud detection improve by 18 percent during peak transaction periods.

The economic benefits extend beyond security teams. A bank leveraging real-time governance achieved a 30‑day acceleration in its AI transformation roadmap, having cleared compliance concerns and insurer checks ahead of schedule. These gains translate into faster time-to-market, lower legal risk reserves, and improved risk-adjusted return on capital—a performance vector closely tracked by institutional investors and equity research analysts covering tech-led financial innovation.

What should financial services firms anticipate for AI runtime tools and compliance frameworks through 2027?

Analysts forecast that by 2027, over 60 percent of Tier‑1 global banks will deploy runtime observability across at least two major AI workflows—typically fraud detection and customer servicing. Roadmaps from Palo Alto Networks, Microsoft, IBM, and CrowdStrike indicate plans to launch unified event graphs—capable of tracing prompt‑to‑action execution chains—and integrated audit dashboards that send runtime alerts to compliance teams as policy incidents.
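The idea of tracing a prompt‑to‑action execution chain can be sketched in a few lines. The example below assumes a simplified model in which every logged event records the event that caused it; the `Event` type, its fields, and the `trace_to_prompt` function are hypothetical and are not drawn from any vendor roadmap.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    event_id: str
    kind: str                 # e.g. "prompt", "tool_call", "action"
    parent_id: Optional[str]  # the event that caused this one, if any


def trace_to_prompt(events: list, event_id: str) -> list:
    """Walk parent links from an event back to its root; return the chain root-first."""
    by_id = {e.event_id: e for e in events}
    chain = []
    seen = set()  # guards against cycles in malformed logs
    current = by_id.get(event_id)
    while current is not None and current.event_id not in seen:
        seen.add(current.event_id)
        chain.append(f"{current.kind}:{current.event_id}")
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))
```

Given a prompt `e1` that triggered a tool call `e2`, which in turn produced an action `e3`, calling `trace_to_prompt(events, "e3")` yields the chain from the prompt down to the action. That chain is the kind of lineage an audit dashboard would surface for a flagged action.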

Emerging runtimes will also support AI lineage reconciliation, aligning decisions with customer identities to support data subject rights requests and adverse action redress. Multi‑agent runtime collaboration is expected to be the next frontier, enabling oversight across coordinated AI services within the enterprise stack. These capabilities may underpin vendor ESG and compliance reporting, in addition to reinforcing cyber and operational resilience.

Could runtime observability become the new audit firewall for AI deployment in financial services?

There is a growing consensus that runtime observability may soon be viewed as the “audit firewall” for AI in regulated industries. Just as compliance teams would not consider deploying financial services software without embedded logging or access control, AI systems without runtime controls may be deemed unfit for production. Institutional investors and large asset managers are already evaluating runtime observability as part of ESG and systemic risk assessments, signaling that this requirement will reach board-level attention in the next 12 months.

Adoption of runtime governance in financial AI workflows appears to be crossing a tipping point—driven by regulation, economics, and operational risk control. Financial services firms that fail to build this layer into their generative AI implementations may find themselves locked out of insurance, procurement, or even cross-border operating agreements.
