IBM (NYSE: IBM) has officially unveiled its new z17 mainframe, powered by the Telum II processor and engineered to support the upcoming Spyre Accelerator. The new hardware platform extends IBM’s long-running mainframe lineage into an era of data-intensive and AI-assisted enterprise computing, focusing on performance, security, and workload efficiency across financial, public-sector, and industrial clients. The Spyre cards will be made commercially available in October 2025 for IBM Z and LinuxONE 5 systems, and in early December for IBM Power 11 servers.

The move signals a defining shift in how IBM integrates artificial intelligence within mission-critical environments. Instead of treating AI as an external workload, the z17 platform embeds inferencing capability directly into the core processor, while Spyre provides a scalable hardware layer to manage multi-model and low-latency AI applications. This combination marks one of IBM’s most consequential infrastructure evolutions in recent years, strengthening its long-term position in hybrid cloud and enterprise AI markets.

How does the Telum II processor transform on-chip AI performance in IBM’s z17 mainframe?

At the heart of IBM z17 lies the Telum II processor, an eight-core chip running at 5.5 GHz and purpose-built for AI-driven transactions. The processor incorporates a second-generation on-chip accelerator that delivers up to four times the inferencing performance of the previous generation. Its upgraded cache system, now featuring 40 percent more virtual cache and a 2.88 GB L4 cache, enables data to be accessed with minimal latency, directly improving real-time decision-making within enterprise workloads.

Telum II also debuts a coherently connected Data Processing Unit (DPU) that offloads I/O operations, allowing the processor to sustain throughput under heavy, complex transaction loads. The architecture lets each CPU core access any AI accelerator unit across the processor drawer, so inference work can be distributed and balanced evenly under high concurrency. Internal testing has indicated that z17 systems can execute more than 450 billion inference operations per day while maintaining millisecond-scale response times, a key advantage for industries such as banking, insurance, and government data systems that demand immediate, data-driven actions.
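To put the daily inference figure in perspective, it can be converted into a sustained per-second rate. The short Python sketch below does that arithmetic. It assumes the load is spread evenly across 24 hours, which real transaction traffic rarely is, and the function and constant names are illustrative only, not taken from IBM.

```python
# Back-of-the-envelope conversion of the article's daily inference figure
# into an average per-second rate. Assumption: load is spread evenly over
# 24 hours; real workloads have peaks well above this average.

INFERENCES_PER_DAY = 450_000_000_000  # "more than 450 billion" per the article
SECONDS_PER_DAY = 24 * 60 * 60        # 86,400 seconds


def sustained_rate_per_second(per_day: int) -> float:
    """Average number of inferences per second for a given daily total."""
    return per_day / SECONDS_PER_DAY


rate = sustained_rate_per_second(INFERENCES_PER_DAY)
print(f"{rate / 1e6:.2f} million inferences per second")  # prints "5.21 million inferences per second"
```

On these assumptions the quoted daily total works out to an average of roughly 5.2 million inferences every second.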

The processor’s embedded AI unit supports both INT8 and FP16 data types, optimizing compute density and power efficiency. For enterprises, that means high-frequency transactional environments such as payment processing, logistics, or real-time fraud monitoring can now embed predictive AI directly at the data source rather than offloading to external GPUs or cloud systems.

What makes the Spyre accelerator a breakthrough in AI hardware for IBM Z, LinuxONE, and Power systems?

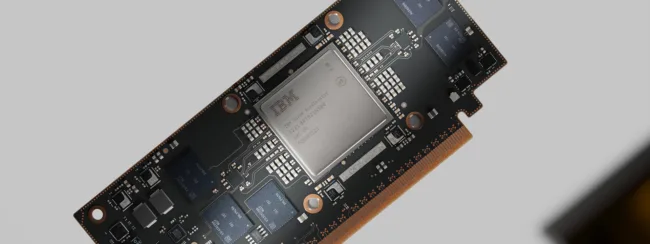

The Spyre Accelerator represents IBM’s most advanced step in enterprise AI hardware integration. Built on a 5 nanometer process, Spyre features 32 accelerator cores and more than 25 billion transistors, mounted on a 75-watt PCI Express card. Cards can be clustered, up to 48 in an IBM Z or LinuxONE system and up to 16 in a Power server, to create a scalable fabric of AI compute that can process multi-model workloads simultaneously.
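The per-card figures can be multiplied out to see what a fully populated cluster looks like. The Python sketch below is a rough illustration using only the numbers quoted above. The `SpyreCluster` class is a hypothetical helper, and the wattage total covers the rated power of the cards alone, not the host system.

```python
from dataclasses import dataclass

# Per-card figures as quoted in the article.
CORES_PER_CARD = 32
WATTS_PER_CARD = 75


@dataclass(frozen=True)
class SpyreCluster:
    """Hypothetical helper describing a fully populated set of Spyre cards."""
    system: str
    cards: int

    @property
    def total_cores(self) -> int:
        return self.cards * CORES_PER_CARD

    @property
    def total_card_watts(self) -> int:
        # Rated card power only; excludes the host system's own draw.
        return self.cards * WATTS_PER_CARD


clusters = [
    SpyreCluster("IBM Z / LinuxONE", 48),  # maximum cards per the article
    SpyreCluster("Power", 16),
]

for c in clusters:
    print(f"{c.system}: {c.cards} cards, {c.total_cores} cores, {c.total_card_watts} W")
```

At the stated maximums this gives 1,536 accelerator cores and 3,600 watts of rated card power on IBM Z or LinuxONE, and 512 cores and 1,200 watts on Power.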

Initially developed by the IBM Research AI Hardware Center, Spyre started as an experimental chip before evolving into a commercial platform through rapid iteration and real-world deployments at IBM’s Yorktown Heights campus and university research collaborations. Its commercialization underscores the depth of IBM’s research-to-product pipeline, transforming experimental AI chip concepts into enterprise-grade accelerators for mission-critical systems.

By allowing inferencing to occur within the same trusted computing boundary as IBM Z mainframes, Spyre eliminates the need for sensitive data transfers to external AI clusters. This on-premises model enables clients to integrate generative AI and agentic workloads while maintaining compliance, energy efficiency, and data privacy. The ability to cluster Spyre cards provides flexibility for institutions that want to scale from lightweight models to complex, high-parameter neural networks without changing infrastructure.

Why is on-premises AI acceleration critical for enterprises in 2025 and beyond?

In an environment where data privacy, latency, and sovereignty have become strategic differentiators, IBM’s hybrid approach positions it uniquely. An estimated 70 percent of global transaction value continues to pass through IBM Z mainframes, which power the backbone of industries such as finance, telecommunications, and government. These enterprises have traditionally relied on secure, isolated computing for sensitive workloads. By embedding AI acceleration directly into those systems, IBM is offering a way to modernize without compromising security.

For sectors like banking and insurance, where latency and accuracy directly impact compliance and profitability, AI-assisted inferencing can unlock predictive automation at scale. Use cases range from fraud detection and credit risk modeling to dynamic pricing and customer analytics. In government and defense systems, the integration of on-prem AI could improve anomaly detection, resource allocation, and public-service automation, all within controlled data environments.

Beyond the core benefits of low latency and privacy, Spyre and Telum II together also address sustainability goals. Both chips are optimized for performance per watt, allowing enterprises to reduce AI operational energy costs relative to equivalent GPU workloads. This could prove especially significant for data-center operators subject to tightening environmental reporting standards.

How do analysts interpret IBM’s strategy in the context of AI infrastructure competition?

Industry analysts view IBM’s z17 and Spyre combination as a bold repositioning of mainframes in the modern AI landscape. For decades, mainframes were perceived as legacy systems: stable, but disconnected from emerging AI architectures dominated by GPU vendors like NVIDIA and AMD. By integrating inferencing directly into the hardware stack, IBM is reframing the mainframe as a secure AI-ready platform that coexists with, rather than competes against, cloud-based AI infrastructure.

The move also plays into IBM’s long-term hybrid strategy, which combines watsonx, its enterprise AI platform, with its traditional systems portfolio. Institutional investors and technology observers have noted that IBM’s hardware-software co-design approach could strengthen its position with regulated clients who prefer keeping workloads on-premises while still accessing advanced model capabilities.

While the z17 and Spyre do not aim to replace GPU clusters in terms of raw teraflops, IBM’s differentiation lies in data proximity, transactional security, and system reliability. In sectors where uptime and compliance matter as much as computational speed, the ability to integrate generative AI directly into transactional workflows could prove decisive.

When will Spyre become available and what should enterprise clients prepare for next?

The IBM z17 mainframe, equipped with Telum II processors, reached general availability on June 18, 2025. The Spyre Accelerator follows on October 28, 2025 for IBM Z and LinuxONE 5 systems and in early December for Power 11 servers. Early adopters of z17 can begin integrating on-chip AI today, while designing future-state architectures that expand with Spyre once the PCIe cards ship later this year.

IBM’s roadmap suggests continued refinement of Spyre across system generations, with potential software optimizations to support more advanced AI models, including sparse and mixture-of-experts frameworks. The company is expected to align Spyre with its watsonx stack for enterprise deployment, providing clients with unified model governance, observability, and data integration.

Analysts believe that if IBM executes effectively, especially in compiler optimization, model support, and customer onboarding, it could transform perceptions of mainframes from legacy assets into essential AI infrastructure. Such positioning would not only defend IBM’s long-held market share in high-security computing but could also attract a new wave of enterprise clients seeking compliant, AI-enabled infrastructure at scale.

From a market sentiment perspective, IBM shares have traded steadily within the USD 190 to USD 200 range through early October 2025. Institutional investors interpret the z17 and Spyre announcements as a continuation of IBM’s steady margin expansion strategy, driven by hybrid cloud, AI, and infrastructure modernization. If adoption metrics align with expectations, sentiment could trend positive into 2026, with buy-side analysts forecasting moderate upside potential amid global enterprise AI spending growth.

In essence, the combination of Telum II and Spyre establishes IBM’s blueprint for AI-native mainframes: systems capable of running traditional transaction processing and generative AI inference side by side. It positions IBM not just as a hardware provider but as an end-to-end AI infrastructure partner, bridging the gap between legacy workloads and the data-driven future. For enterprises looking to deploy secure, scalable AI without surrendering data sovereignty, IBM’s latest evolution could become the defining model of how mainframes reinvent themselves for the generative AI age.
