Super Micro Computer, Inc. (NASDAQ: SMCI), trading as Supermicro, has introduced a new suite of AI factory cluster solutions designed to help enterprises accelerate artificial intelligence deployment across industries. Announced on November 18, 2025, at the Supercomputing Conference in St. Louis, these new offerings are built on NVIDIA Enterprise Reference Architectures and powered by NVIDIA Blackwell GPUs. They feature full-stack integration of compute, networking, and software, all validated and tested by Super Micro Computer, Inc. to reduce complexity, shorten deployment timelines, and limit the need for multi-vendor coordination.

The solutions represent Super Micro Computer, Inc.’s latest move to capture demand from enterprises struggling with AI infrastructure rollout. By packaging compute nodes, storage systems, Ethernet networking, and NVIDIA’s software stack into scalable, plug-and-play clusters, the company is positioning itself as a full-service provider in the evolving AI infrastructure market.

How does Supermicro’s AI factory architecture accelerate time-to-online for enterprises?

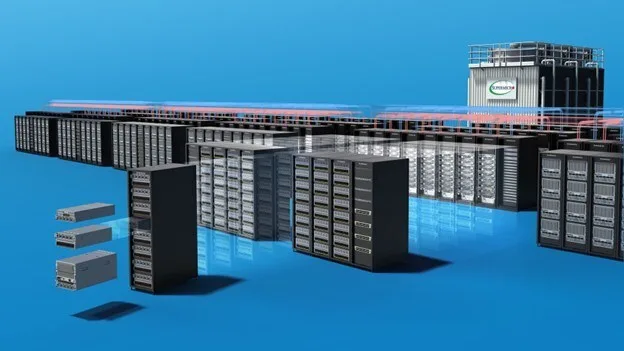

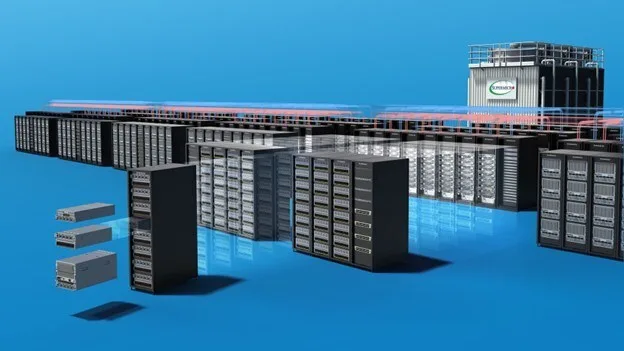

The cornerstone of the launch is the AI factory model, a term Super Micro Computer, Inc. uses to describe its modular, pre-validated cluster solutions. These systems are built around the company’s Data Center Building Block Solutions, also known as DCBBS, and come in small, medium, and large configurations. The smallest includes four compute nodes with 32 GPUs, while the largest scales to 32 nodes and 256 GPUs, supporting a wide range of AI workloads from low-latency inference to large-scale model training.
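The sizing figures quoted above are easy to sanity-check. The following is a minimal sketch, not anything published by Supermicro: it assumes eight GPUs per node (the value implied by 4 nodes with 32 GPUs), and the function name `total_gpus` and the dictionary `cluster_sizes` are hypothetical. The medium configuration is left out because the announcement, as reported here, does not give its node count.

```python
# Assumption: eight GPUs per node, as implied by the article's
# "four compute nodes with 32 GPUs" figure.
GPUS_PER_NODE = 8


def total_gpus(nodes: int, gpus_per_node: int = GPUS_PER_NODE) -> int:
    """Return the GPU count for a cluster with the given number of nodes."""
    if nodes < 1 or gpus_per_node < 1:
        raise ValueError("nodes and gpus_per_node must be positive")
    return nodes * gpus_per_node


# Only the two endpoints quoted in the article; names are illustrative.
cluster_sizes = {"small": 4, "large": 32}

if __name__ == "__main__":
    for name, nodes in cluster_sizes.items():
        print(f"{name}: {nodes} nodes x {GPUS_PER_NODE} GPUs = {total_gpus(nodes)} GPUs")
```

Both quoted endpoints (32 and 256 GPUs) fall out of the same per-node figure, which suggests the cluster sizes differ only in node count, not in node design.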

Traditional data center builds can take weeks or months to plan, source, and assemble across different vendors. Super Micro Computer, Inc. claims that its AI factory clusters reduce time-to-online by integrating components and validating them up to Level 12, which includes full multi-rack testing. The systems are manufactured and tested at the company’s global production facilities, including its campus in San Jose, California.

Each AI factory cluster ships with NVIDIA AI Enterprise, NVIDIA Omniverse, and Run:ai orchestration tools, along with NVIDIA Spectrum-X Ethernet networking and complete cabling. The systems are delivered as turnkey infrastructure, allowing enterprises to power up and begin running workloads immediately.

What use cases are being targeted with the new NVIDIA Blackwell-powered configurations?

Super Micro Computer, Inc. has optimized the new cluster designs around two GPU configurations from NVIDIA: the RTX PRO 6000 Blackwell Server Edition and the HGX B200 platform. The former is intended for general-purpose AI inference, high-performance computing, and rendering workloads, while the latter is aimed at training large language models and running tightly coupled GPU applications that require high-bandwidth interconnects via NVIDIA NVLink.

The RTX PRO 6000-based systems are built using Super Micro Computer, Inc.’s 4U and 5U PCIe GPU servers and come with eight GPUs per node. These systems are configured in a 2-8-5-200 architecture, a shorthand for the CPU, GPU, and NIC counts per node followed by the network bandwidth, and are well-suited for enterprises seeking a common platform to support visual computing, simulation, and real-time analytics.
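To make the 2-8-5-200 label concrete, here is a small illustrative sketch that splits such a label into its four fields in the CPU-GPU-NIC-bandwidth order described above. The names `NodeProfile` and `parse_profile` are hypothetical, and the unit of the bandwidth field is left unspecified because the article does not state it.

```python
from typing import NamedTuple


class NodeProfile(NamedTuple):
    """Fields of a CPU-GPU-NIC-bandwidth label (hypothetical helper type)."""
    cpus: int
    gpus: int
    nics: int
    bandwidth: int  # unit not stated in the article


def parse_profile(label: str) -> NodeProfile:
    """Split a label such as '2-8-5-200' into its four integer fields."""
    parts = label.split("-")
    if len(parts) != 4:
        raise ValueError(f"expected four dash-separated fields, got {label!r}")
    return NodeProfile(*(int(part) for part in parts))


if __name__ == "__main__":
    profile = parse_profile("2-8-5-200")
    print(profile)
```

Read this way, the second field matches the eight GPUs per node quoted for the RTX PRO 6000-based systems.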

The HGX B200-based platforms, by contrast, are built using Super Micro Computer, Inc.’s 10U modular GPU enclosures, with each node containing eight NVIDIA GPUs interconnected via NVLink. These high-performance configurations are optimized for training multi-billion-parameter models and can be deployed in academic research, large-scale digital twins, autonomous systems development, and enterprise-grade fine-tuning applications.

By addressing both generalist and specialist AI workloads, Super Micro Computer, Inc. is catering to a broad spectrum of enterprise buyers, from smaller AI labs to multinational corporations scaling production deployments.

What makes Supermicro’s infrastructure strategy distinct in the growing AI server market?

Super Micro Computer, Inc. has differentiated itself from traditional server vendors by focusing on fast time-to-market, modular data center solutions, and tight alignment with NVIDIA’s accelerator roadmap. The company was among the first to commercially ship NVIDIA HGX B200 and NVIDIA GB200 NVL72 platforms, and its latest AI factory product line continues this trend with Blackwell-based offerings ready for deployment at scale.

A key component of the strategy is vertical integration. Super Micro Computer, Inc. manufactures, assembles, validates, and ships these AI clusters under one roof, minimizing the risks of vendor fragmentation and compatibility issues. The company’s emphasis on Ethernet networking through NVIDIA Spectrum-X and its partnerships with software vendors further reduce the friction associated with launching enterprise-scale AI infrastructure.

These AI factory solutions are also aligned with the NVIDIA AI Data Platform reference design, allowing enterprises to integrate data pipelines, feature stores, model training environments, and inference endpoints within the same infrastructure footprint. The storage backbone offered by Super Micro Computer, Inc. supports the entire AI lifecycle, from data preprocessing to archival.

How is Supermicro’s stock performing amid growing AI infrastructure demand?

Super Micro Computer, Inc. has emerged as one of the top-performing stocks in the AI hardware and infrastructure space in 2025. Shares of the company have surged by more than 420 percent since the start of the year, supported by strong earnings, increasing enterprise adoption, and sustained demand for GPU-rich systems.

The launch of AI factory clusters is likely to reinforce bullish sentiment, especially among institutional investors who view the company as a leading beneficiary of the generative AI boom. Asset managers with heavy exposure to AI-related funds have increased their stakes in Super Micro Computer, Inc. in recent quarters, citing the firm’s superior integration model and early access to NVIDIA’s next-generation chips.

Brokerage firms have maintained positive ratings on the stock, with several labeling it a high-conviction AI infrastructure play. The broader market views Super Micro Computer, Inc. as a company with outsized leverage to AI growth without the heavy capital expenditures typically associated with semiconductor manufacturers.

What are enterprise buyers and market analysts watching as Supermicro scales its AI factory model?

Enterprise IT leaders are closely watching how the AI factory solutions perform in real-world production environments, especially in sectors like healthcare, manufacturing, finance, and telecom. The ability to scale GPU clusters with minimal integration effort is seen as a key driver for adoption.

Market analysts will be tracking the order backlog, gross margins on these integrated solutions, and expansion into new geographies. There is also growing interest in how Super Micro Computer, Inc. plans to evolve the DCBBS ecosystem to support liquid cooling, power-efficient AI edge deployments, and sovereign cloud requirements for public sector clients.

Some institutional watchers are also monitoring whether Super Micro Computer, Inc. will expand its portfolio beyond NVIDIA-aligned products to include options that cater to other emerging accelerator vendors or proprietary inference engines. Diversification of its GPU partnerships could provide additional runway for growth in global markets where NVIDIA supply constraints may slow down deployment timelines.

Analysts expect continued demand for AI infrastructure well into 2026, and Super Micro Computer, Inc. appears well-positioned to capitalize on that trend, provided it can continue shipping complete systems at the scale and speed required by enterprise buyers.

Key takeaways: Supermicro’s AI factory cluster launch decoded

- Super Micro Computer, Inc. (NASDAQ: SMCI) has launched pre-integrated AI factory clusters built on NVIDIA Enterprise Reference Architectures and powered by NVIDIA Blackwell GPUs, targeting scalable enterprise deployment across industries.

- The new clusters come in small, medium, and large configurations ranging from 4 to 32 nodes and up to 256 GPUs, with full-stack integration including NVIDIA AI Enterprise, Omniverse, Spectrum-X Ethernet networking, and Run:ai orchestration.

- Supermicro’s Data Center Building Block Solutions (DCBBS) enable modular and rapid infrastructure build-outs, allowing enterprises to transition from legacy or greenfield environments to AI-ready operations with minimal integration effort.

- Two distinct GPU configurations are available: RTX PRO 6000 Blackwell Server Edition for inference and rendering workloads, and HGX B200 with NVLink for large-scale model training and AI research applications.

- The clusters are validated up to Level 12 (multi-rack), manufactured at Supermicro’s global facilities including San Jose, and delivered as plug-and-play solutions that reduce time-to-online.

- With year-to-date stock gains exceeding 420 percent, Super Micro Computer, Inc. has emerged as a leading AI infrastructure play among institutional investors, supported by strong demand for NVIDIA-aligned systems.

- Analysts and enterprise buyers are watching adoption trends, margin expansion, geographic penetration, and Supermicro’s ability to diversify beyond NVIDIA-based systems in future product cycles.

- The AI factory model reflects growing enterprise urgency to operationalize AI at scale, with infrastructure now viewed as a productivity multiplier in sectors like finance, healthcare, and manufacturing.
